Agent Performance

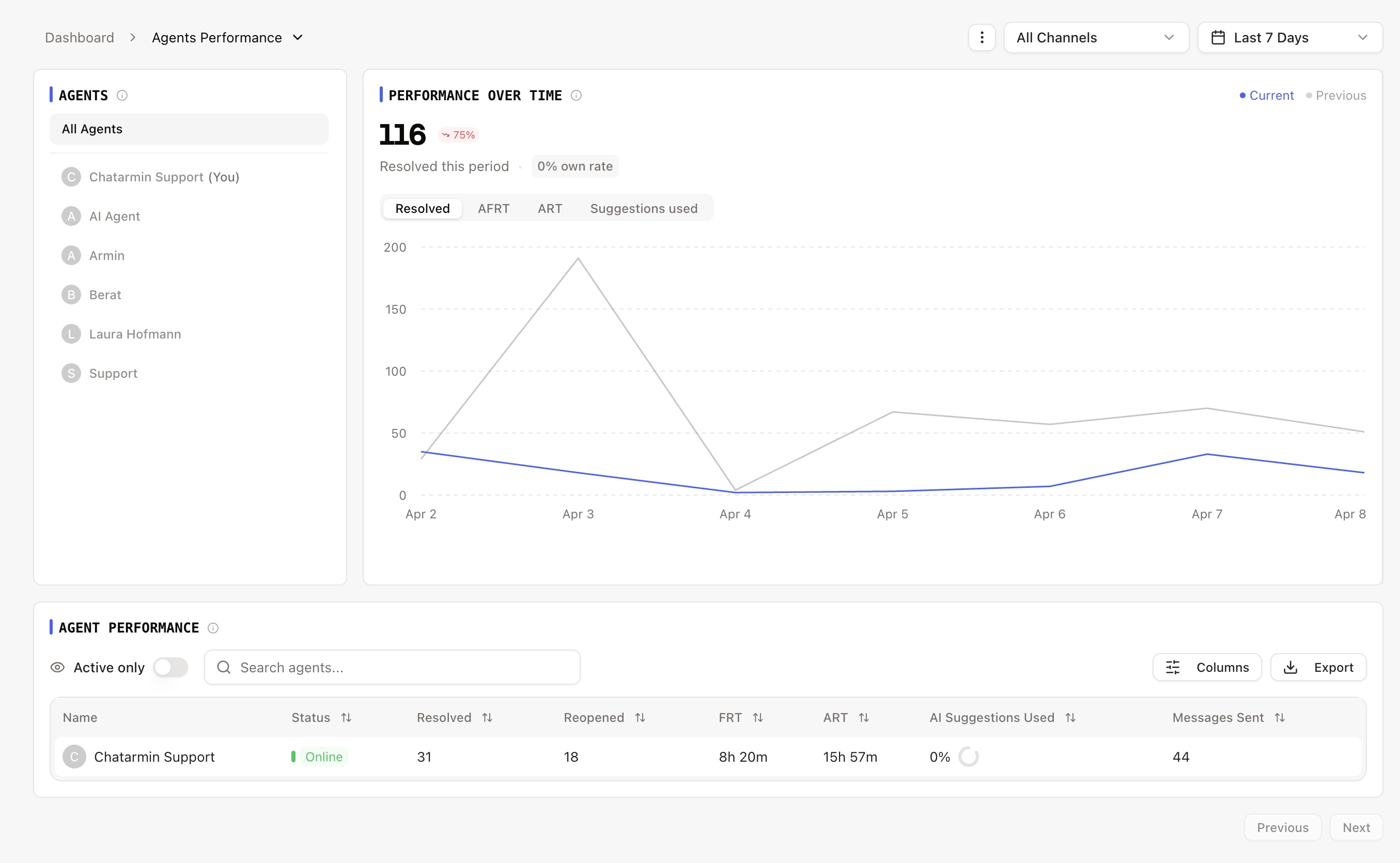

The Agent Performance page tracks individual and team-level agent productivity, response times, and resolution metrics. It provides a per-agent breakdown so you can identify top performers, spot bottlenecks, and ensure workload is distributed effectively.

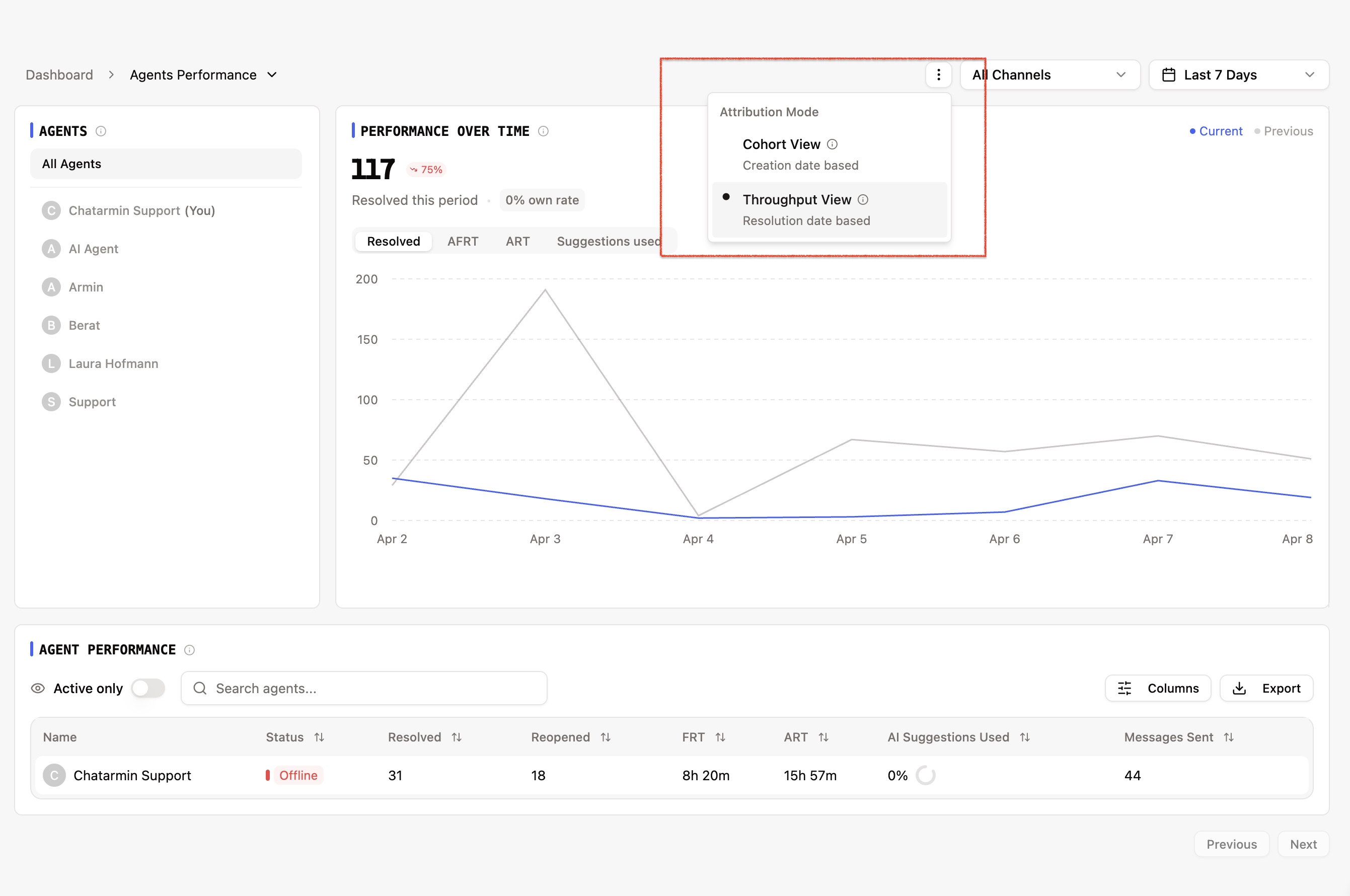

This page features two attribution modes that let you switch between different perspectives on your team's performance.

Attribution Modes: Cohort vs. Throughput

At the top-right of the page, you'll find a mode toggle that changes how tickets are attributed to agents. The default mode is Throughput.

Cohort Mode

Cohort mode answers the question: "How did agents handle the tickets that came in during this period?"

Scope: Tickets created during the selected date range

Assigned: Tickets assigned to the agent that were created in the period

Resolved: Of those assigned tickets, how many are now resolved

Use case: Understanding how your team handled a specific cohort of incoming work -- for example, "Of the tickets that arrived last week, how many has each agent resolved?"

Throughput Mode (Default)

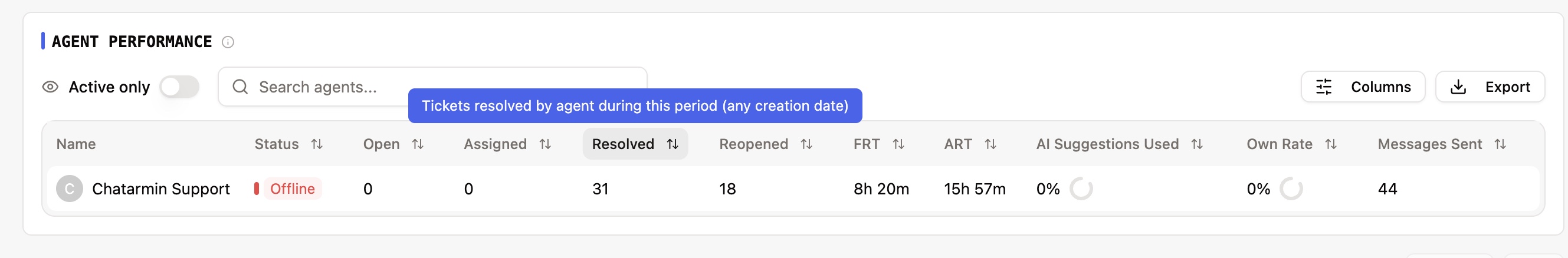

Throughput mode answers the question: "What did each agent actually accomplish during this period?"

Scope: Tickets resolved during the selected date range, regardless of when they were created

Assigned: This column is hidden in throughput mode (since it's not relevant to output-based measurement)

Resolved: Tickets resolved by the agent in the period, including tickets that may have been created days or weeks ago

Use case: Measuring actual output and productivity -- for example, "How many tickets did each agent close this week?"

How each metric changes by mode:

Resolved: Cohort = tickets created in period that are now resolved. Throughput = tickets resolved in period (any creation date).

Assigned: Cohort = tickets created in the period and assigned to the agent. Throughput = hidden.

Reopened: Cohort = reopened tickets from those created in the period. Throughput = reopened tickets from those resolved in the period.

Avg FRT: Same in both modes -- based on tickets created in the period where the agent was the first responder.

Avg ART: Cohort = resolution time for assigned tickets that are resolved. Throughput = resolution time for tickets resolved in the period.

AI Drafts Used: Cohort = suggestion rate for resolved tickets created in the period. Throughput = suggestion rate for tickets resolved in the period.
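The difference between the two scopes can be sketched in a few lines. This is an illustration only: the `Ticket` record, its field names, and the `in_scope` helper are assumptions made for the example, not the product's actual data model. It shows that Cohort mode filters on the creation date while Throughput mode filters on the resolution date.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical record: these field names are assumptions for this sketch.
    created_on: date
    resolved_on: Optional[date]  # None while the ticket is still unresolved


def in_scope(ticket: Ticket, start: date, end: date, mode: str) -> bool:
    """Return True if the ticket belongs to the selected period under `mode`."""
    if mode == "cohort":
        # Cohort: scoped by creation date.
        return start <= ticket.created_on <= end
    if mode == "throughput":
        # Throughput: scoped by resolution date, whatever the creation date.
        return ticket.resolved_on is not None and start <= ticket.resolved_on <= end
    raise ValueError(f"unknown mode: {mode}")


# A ticket created two weeks before the selected week but resolved inside it.
old_ticket = Ticket(created_on=date(2024, 5, 20), resolved_on=date(2024, 6, 4))
week = (date(2024, 6, 3), date(2024, 6, 9))

print(in_scope(old_ticket, *week, mode="cohort"))      # False
print(in_scope(old_ticket, *week, mode="throughput"))  # True
```

The same ticket is invisible in Cohort mode and counted in Throughput mode, which is why the two modes can report different Resolved totals for the same date range.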

Summary Cards

At the top of the page, you'll see summary metrics for the selected agent(s) and period:

Resolved: Total tickets resolved, with a breakdown of Own (agent resolved tickets assigned to them) and Helped (agent resolved tickets assigned to someone else). Includes a trend comparison against the previous period.

Avg FRT: Average first response time across the selected scope. Trend shows whether response times improved or worsened.

Avg ART: Average resolution time across the selected scope.

AI Drafts Usage Rate: Percentage of AI suggestions that agents accepted and used, calculated as (suggestions used / suggestions offered) x 100.

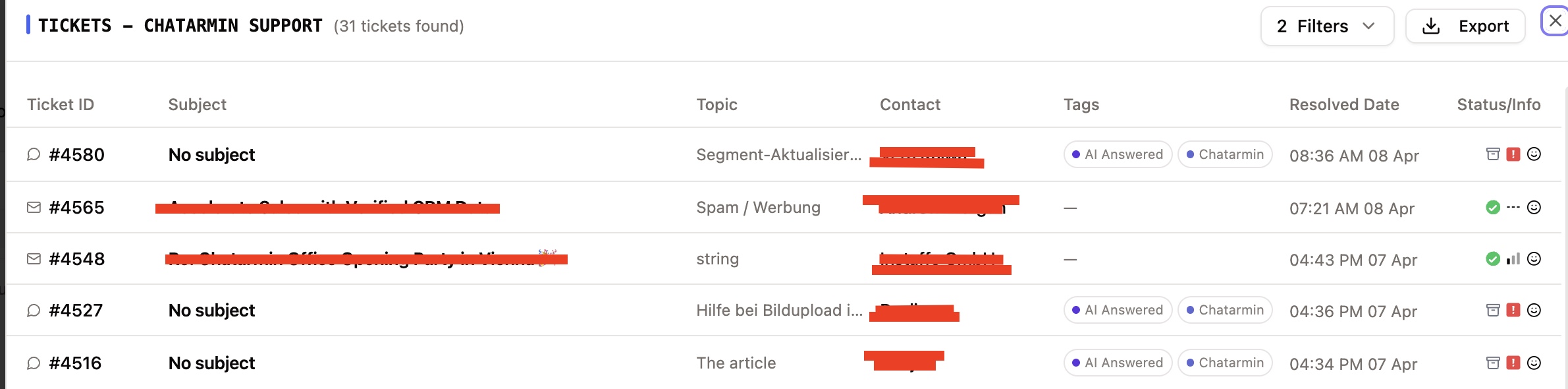

Agent Table

The main table lists each agent with their individual performance metrics:

Agent: Agent name and current online status indicator (online, offline, busy, or idle)

Open: Number of currently open tickets assigned to this agent (real-time, not affected by the date range)

Assigned: Number of tickets created during the period that are assigned to the agent (Cohort mode only -- hidden in Throughput mode)

Resolved: Tickets resolved by this agent. Hover to see the breakdown:

Own: Tickets where the agent was both assigned and the resolver

Helped: Tickets where the agent resolved a ticket assigned to another agent

Reopened: Number of tickets that were reopened after this agent resolved them

Avg FRT: Average first response time for tickets where this agent was the first human to respond

Avg ART: Average time from ticket creation to resolution for tickets resolved by this agent (excluding customer wait time)

AI Drafts Used: Percentage of AI-generated draft suggestions that the agent accepted, shown as a percentage with the raw count (e.g., "40% (20/50)") on hover

Messages Sent: Total outbound messages sent by this agent during the period

Optional columns (can be toggled via column settings):

Own Rate: Percentage of the agent's assigned tickets that they resolved themselves (vs. being resolved by another agent or the system)
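As a worked example of the AI Drafts Used figure, the sketch below computes the rate as (suggestions used / suggestions offered) x 100 and formats it with the raw count. The function names are hypothetical, and returning 0 when no suggestions were offered is an assumption, since this page does not say how that case is displayed.

```python
def usage_rate(used: int, offered: int) -> float:
    """(suggestions used / suggestions offered) x 100."""
    if offered == 0:
        # Assumption: an empty denominator is reported as 0.
        return 0.0
    return used * 100 / offered


def format_usage(used: int, offered: int) -> str:
    """Render the rate with its raw count, as in the hover text."""
    return f"{usage_rate(used, offered):.0f}% ({used}/{offered})"


print(format_usage(20, 50))  # 40% (20/50)
```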

Important notes:

FRT per agent may differ from the global FRT average because it only includes tickets where that specific agent was the first responder.

ART per agent may differ from the global ART average because it only includes tickets resolved by that specific agent.

Resolved attribution is based on the solved_by field -- the agent who actually clicked "Resolve" or "Send + Resolve." If a ticket is resolved by a workflow or auto-resolve rule, it is not attributed to any agent.

Clicking on any agent row opens a ticket drilldown (see below).
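Per-agent FRT depends on who counts as the first responder. The sketch below shows one way that rule could be applied: only replies from human agents are considered, so auto-replies, AI messages, and workflow messages are skipped. The `Message` record and its `sender_type` values are assumptions made for this example, not the product's schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Message:
    # Hypothetical message record; the sender_type values are assumptions.
    sent_at: datetime
    sender_type: str  # "agent", "customer", "auto_reply", "ai", or "workflow"
    agent_name: Optional[str] = None


def first_responder(messages: List[Message]) -> Optional[str]:
    """Return the human agent who sent the earliest agent reply, or None."""
    human_replies = [m for m in messages if m.sender_type == "agent"]
    if not human_replies:
        return None
    return min(human_replies, key=lambda m: m.sent_at).agent_name


thread = [
    Message(datetime(2024, 6, 3, 9, 0), "customer"),
    Message(datetime(2024, 6, 3, 9, 1), "auto_reply"),
    Message(datetime(2024, 6, 3, 9, 5), "ai"),
    Message(datetime(2024, 6, 3, 9, 30), "agent", "Dana"),
    Message(datetime(2024, 6, 3, 10, 0), "agent", "Lee"),
]

print(first_responder(thread))  # Dana
```

In this thread only Dana's FRT average would include the ticket, even though Lee also replied.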

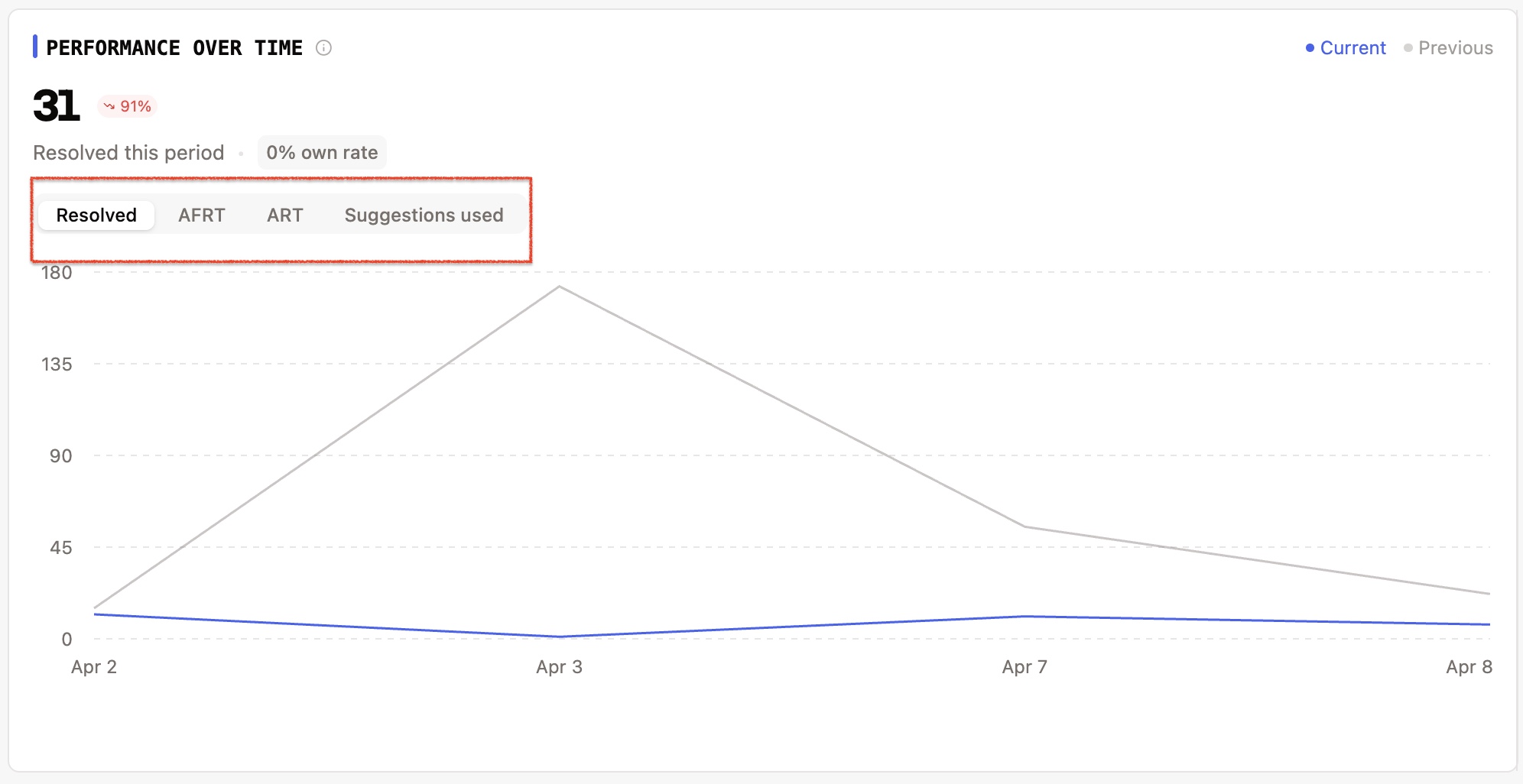

Performance Chart

Below the summary cards, a line chart tracks four metrics over time with current vs. previous period comparison:

Resolved -- Count of tickets resolved per time interval, with own/helped breakdown and own rate

Avg FRT -- Average first response time per interval (displayed in hours)

Avg ART -- Average resolution time per interval (displayed in hours)

Suggestions Used -- Count of AI suggestions used per interval, with usage rate

Switch between metrics using the tabs above the chart. Each tab shows:

The total value for the period

A trend indicator comparing against the previous period

A line chart with the current period (solid) and previous period (dotted) plotted together

CSAT Panel

On the right side of the page, a collapsible CSAT (Customer Satisfaction) panel shows survey results for the selected agent(s):

Average Score: The mean CSAT rating on a 1-5 scale, displayed with stars

Rating Distribution: A breakdown of responses per rating (1 through 5), showing count and percentage with visual progress bars

Each rating is shown with a descriptive icon and label (e.g., "Very Unsatisfied" through "Very Satisfied")

Survey Statistics:

Sent: Total number of surveys sent

Responded: Number of surveys that received a response

Pending: Surveys sent but not yet answered

Response Rate: Percentage of surveys that received a response
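The panel's figures can be derived from two inputs: the number of surveys sent and the list of ratings received. The sketch below is illustrative only. The function name and the returned keys are hypothetical, and it assumes every sent survey is either answered or still pending.

```python
from typing import Dict, List


def csat_summary(sent: int, ratings: List[int]) -> Dict[str, object]:
    """Summarize CSAT results from the sent count and the 1-5 ratings received."""
    responded = len(ratings)
    return {
        "sent": sent,
        "responded": responded,
        # Pending: surveys sent but not yet answered.
        "pending": sent - responded,
        # Response rate as a percentage of surveys sent.
        "response_rate": responded * 100 / sent if sent else 0.0,
        # Mean rating on the 1-5 scale; None when there are no responses.
        "average_score": sum(ratings) / responded if responded else None,
        # Count of responses per rating, 1 through 5.
        "distribution": {score: ratings.count(score) for score in range(1, 6)},
    }


summary = csat_summary(sent=10, ratings=[5, 4, 5, 4])
print(summary["pending"])        # 6
print(summary["response_rate"])  # 40.0
print(summary["average_score"])  # 4.5
```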

When a specific agent is selected, the CSAT panel shows only that agent's survey results. When no agent is selected, it shows the organization-wide results.

The panel auto-collapses when there are no survey responses to display.

How CSAT attribution works per mode:

Cohort: Shows survey results for tickets created in the selected period

Throughput: Shows survey results for surveys sent in the selected period (regardless of when the ticket was created)

Agent Drilldown

Clicking on any agent row in the table opens a ticket list panel showing the individual tickets associated with that agent. The tickets shown depend on the active attribution mode:

Cohort mode: Displays tickets assigned to the agent that were created during the selected period

Throughput mode: Displays tickets resolved by the agent during the selected period

From the drilldown, you can click on any ticket to navigate directly to it in the inbox.

Agent Filter (Sidebar)

On the left side, you can select a specific agent to filter the entire page to that agent's data. When an agent is selected:

Summary cards show only that agent's metrics

The chart shows only that agent's trend data

The CSAT panel shows only that agent's survey results

The table highlights the selected agent

Click on the agent again or use "All Agents" to return to the full team view.

Key Definitions

Own (Resolved Own): Tickets where the resolving agent (solved_by) is the same as the assigned agent (agent_id). This means the agent resolved a ticket that was assigned to them.

Helped (Resolved Helped): Tickets where the resolving agent is different from the assigned agent. This means the agent helped resolve a ticket that was assigned to a colleague.

Own Rate: (Resolved Own / Total Assigned) x 100. The percentage of an agent's assigned tickets that they personally resolved.

AI Drafts Used Rate: (AI Suggestions Used / AI Suggestions Offered) x 100. How frequently the agent adopts AI-generated draft suggestions.

First Responder: The human agent who sent the first reply on a ticket. Auto-replies, AI messages, and workflow messages do not count.

Solved By: The agent who performed the resolution action (clicked "Resolve" or "Send + Resolve"). Tickets resolved by automation are not attributed to any agent.
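The Own, Helped, and Own Rate definitions above can be expressed as a short sketch. The solved_by and agent_id names come from this page, while the `Ticket` class, the `resolution_breakdown` helper, and the use of `None` for tickets resolved by automation are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Ticket:
    agent_id: Optional[str]   # assigned agent
    solved_by: Optional[str]  # resolving agent; None if unresolved or resolved by automation


def resolution_breakdown(tickets: List[Ticket], agent: str) -> Dict[str, float]:
    """Count Own and Helped resolutions and compute Own Rate for one agent."""
    own = sum(1 for t in tickets if t.solved_by == agent and t.agent_id == agent)
    helped = sum(1 for t in tickets if t.solved_by == agent and t.agent_id != agent)
    assigned = sum(1 for t in tickets if t.agent_id == agent)
    # Own Rate = (Resolved Own / Total Assigned) x 100.
    own_rate = own * 100 / assigned if assigned else 0.0
    return {"own": own, "helped": helped, "assigned": assigned, "own_rate": own_rate}


tickets = [
    Ticket(agent_id="ana", solved_by="ana"),  # Own for ana
    Ticket(agent_id="ana", solved_by="ana"),  # Own for ana
    Ticket(agent_id="ana", solved_by="ben"),  # Helped for ben
    Ticket(agent_id="ana", solved_by=None),   # automation: attributed to no agent
    Ticket(agent_id="ben", solved_by="ana"),  # Helped for ana
]

print(resolution_breakdown(tickets, "ana"))
# {'own': 2, 'helped': 1, 'assigned': 4, 'own_rate': 50.0}
```

Ana resolved three tickets in total, but only two of her four assigned tickets count toward her Own Rate.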